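The pirated site where I've been reading a novel lately has way too many ads, and I still wanted to read for free, so I wrote a crawler emmm (okay, really I just didn't want to write my lab report).

This code targets the pirated novel site https://www.26ksw.com/, but pirated sites are all pretty much the same anyway...

Then I noticed that this site seems to be missing two chapters of the book emmm

The idea is simple, I'm just not very familiar with bs4, so it took me quite a while...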

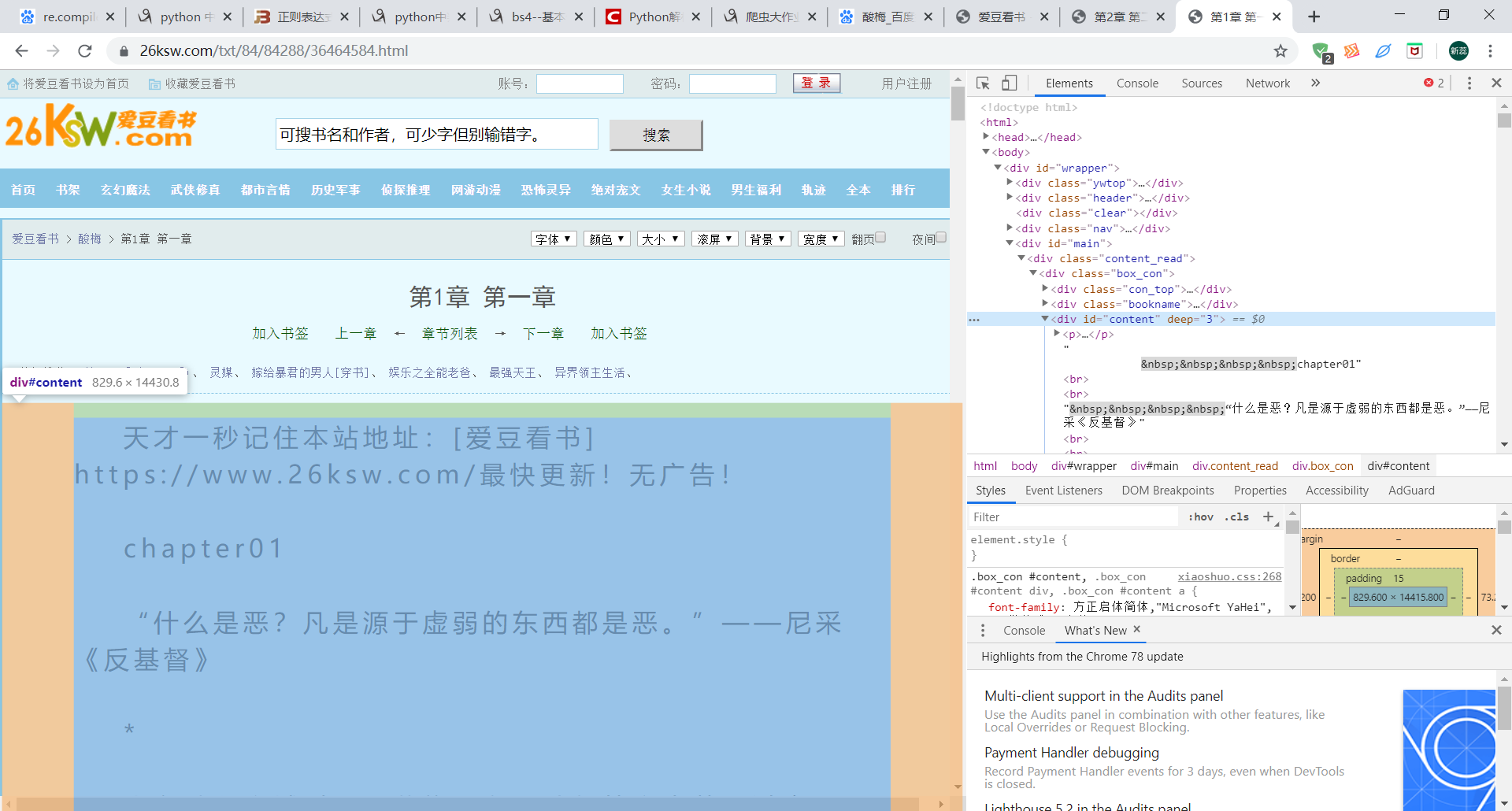

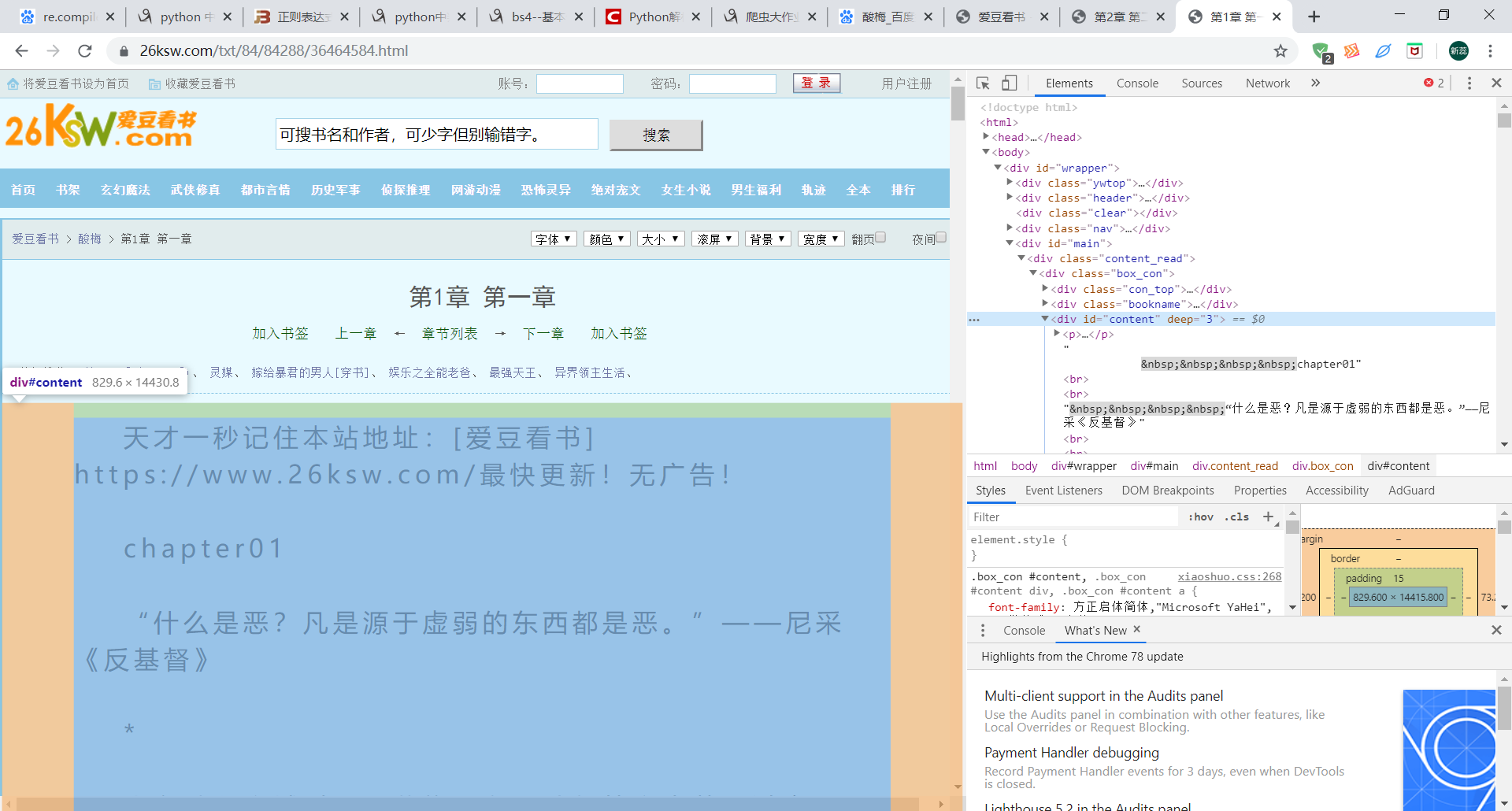

Look at the page structure in the Elements panel of the browser's inspector and hack something together from there...

    # coding:utf-8
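A minimal sketch of the idea, not the actual script: pull the chapter links out of the book's index page, then pull the text out of each chapter page. The selectors `#list dd a` and `#content` are my assumptions about the markup (this layout is common on sites like this, but check it against what the Elements panel shows), and the function names `parse_chapter_links` and `parse_chapter_text` are made up for illustration.

```python
# coding: utf-8
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def parse_chapter_links(index_html, base_url):
    """Return (title, absolute_url) pairs from a book's index page."""
    soup = BeautifulSoup(index_html, "html.parser")
    links = []
    # Assumption: chapter links sit in <div id="list"> ... <dd><a href="...">.
    for a in soup.select("#list dd a"):
        href = a.get("href")
        if href:
            # Chapter hrefs are usually relative, so resolve them against the site.
            links.append((a.get_text(strip=True), urljoin(base_url, href)))
    return links


def parse_chapter_text(chapter_html):
    """Return the chapter body as plain text, one non-empty line per row."""
    soup = BeautifulSoup(chapter_html, "html.parser")
    # Assumption: the chapter body sits in <div id="content">.
    content = soup.select_one("#content")
    if content is None:
        return ""
    # Ads tend to be injected as <script> or nested <div> tags; drop them.
    for junk in content.find_all(["script", "div"]):
        junk.decompose()
    # <br> tags carry no text, so join the text pieces with newlines instead.
    lines = (line.strip() for line in content.get_text("\n").splitlines())
    return "\n".join(line for line in lines if line)
```

Both functions take HTML strings, so they can be tried on a saved page first. To crawl for real, fetch each page (for example with `requests.get(url)`), set the response encoding to whatever the site uses, and append each chapter's text to one file, ideally with a short pause between requests.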
